Hi everyone,

I wanted to share a tool I’ve been building for myself over the past few months.

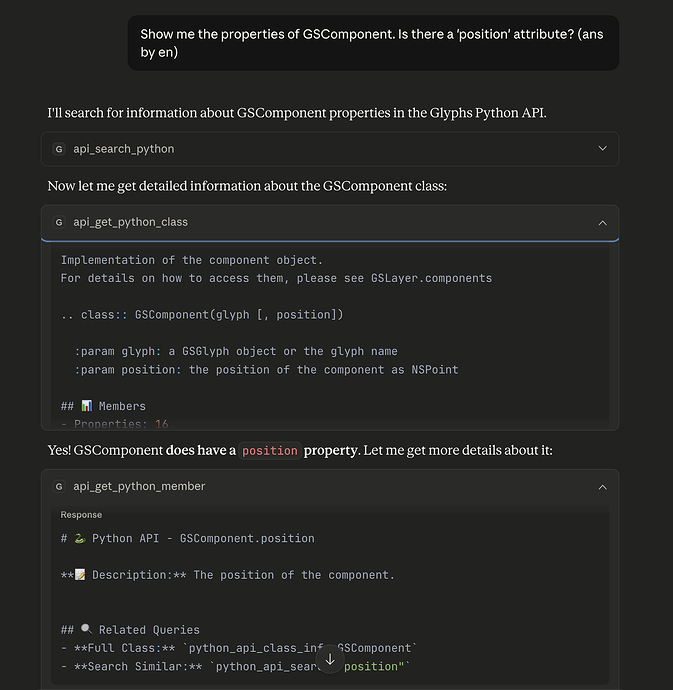

Like many of you, I use AI (Claude) to help with scripting. But I got really tired of it “hallucinating” methods that don’t exist or guessing wrong parameter names. It was often more frustrating than helpful.

So, I decided to fix it properly. I built a custom MCP (Model Context Protocol) server called glyphs-info-mcp.

What it actually does: Instead of just pasting code snippets into the chat, this tool gives Claude direct access to the “ground truth” of Glyphs.

-

It checks the API: Before writing code, it looks up the actual Python/ObjC documentation.

-

It reads the Handbook: I can ask it “How do I set up corner components?” and it fetches the relevant chapter.

-

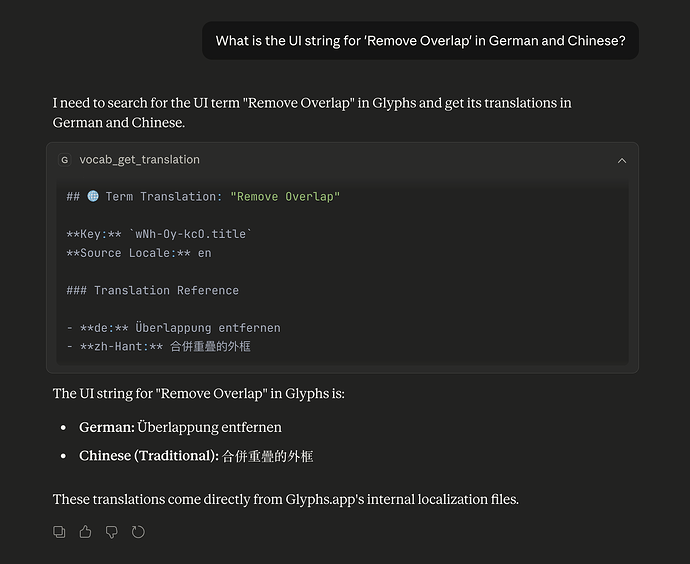

It knows the UI terms (The fun part): I ended up writing a parser that reads the

.stringsfiles directly from the Glyphs app bundle. So it knows exactly what “Remove Overlap” is called in Chinese, German, or any other language.
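The `.strings` idea is easy to reproduce in plain Python. Below is a minimal sketch, not the parser inside glyphs-info-mcp. It assumes each localized file is either a compiled property list (binary or XML, which app bundles built with Xcode often ship) or the plain-text `"key" = "value";` format. The names `parse_strings` and `translate` are purely illustrative. It decodes a file into a dict and then maps an English label such as “Remove Overlap” to another language by matching the shared keys.

```python
import plistlib
import re

# Matches "key" = "value"; pairs, allowing backslash escapes inside the quotes.
_PAIR = re.compile(r'"((?:[^"\\]|\\.)*)"\s*=\s*"((?:[^"\\]|\\.)*)"\s*;')
# Only the most common escapes are decoded; anything else (e.g. \U0041) is kept verbatim.
_ESCAPES = {"n": "\n", "t": "\t", '"': '"', "\\": "\\"}


def _unescape(s: str) -> str:
    return re.sub(r"\\(.)", lambda m: _ESCAPES.get(m.group(1), m.group(0)), s)


def parse_strings(data: bytes) -> dict[str, str]:
    """Decode one .strings file (hypothetical helper) into a key -> text dict."""
    # Compiled files are property lists; plistlib reads both binary and XML plists.
    if data.startswith(b"bplist") or data.lstrip().startswith(b"<?xml"):
        return plistlib.loads(data)
    # Plain-text .strings files are usually UTF-16 with a BOM, or UTF-8.
    if data[:2] in (b"\xff\xfe", b"\xfe\xff"):
        text = data.decode("utf-16")
    else:
        text = data.decode("utf-8-sig")
    # Drop /* block */ and whole-line // comments. Simplification: a "/*" inside
    # a quoted value would be stripped as well.
    text = re.sub(r"/\*.*?\*/", "", text, flags=re.S)
    text = re.sub(r"^\s*//.*$", "", text, flags=re.M)
    return {_unescape(k): _unescape(v) for k, v in _PAIR.findall(text)}


def translate(label: str, english: dict[str, str], target: dict[str, str]) -> list[str]:
    """Return the target-language text for every key whose English text equals `label`."""
    return [target[k] for k, v in english.items() if v == label and k in target]
```

To use it, read the bytes of a file in the bundle’s `en.lproj` folder and of the file with the same name in another `.lproj` folder, parse both, and call `translate("Remove Overlap", en, other)`. The sketch does not know which `.strings` file in Glyphs holds a given menu title; that has to be found by looking inside the bundle.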

I’ve been using this daily to rewrite my plugins, and it has saved me a ton of time digging through docs.

I thought it might be useful for other scripters here, so I cleaned up the code and put it on PyPI today.

How to try it: If you use uv (which I highly recommend) and a client like Claude Desktop:

```
uvx glyphs-info-mcp
```
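In Claude Desktop the server also has to be registered in the app’s config file. Claude Desktop reads MCP servers from an `mcpServers` object in `claude_desktop_config.json`, which on macOS lives in `~/Library/Application Support/Claude/`. The following is a minimal Python sketch that merges an entry launching `uvx glyphs-info-mcp` into that file. It assumes the file is plain JSON. The server name `glyphs-info` and the helper names `add_mcp_server` and `register` are illustrative choices only, not part of the package.

```python
import json
from pathlib import Path

# Default Claude Desktop config location on macOS (assumption: adjust if yours differs).
CONFIG_PATH = Path.home() / "Library" / "Application Support" / "Claude" / "claude_desktop_config.json"


def add_mcp_server(config: dict, name: str, command: str, args: list[str]) -> dict:
    """Return a copy of `config` with one MCP server entry added under "mcpServers"."""
    updated = dict(config)
    servers = dict(updated.get("mcpServers", {}))  # keep any servers already listed
    servers[name] = {"command": command, "args": list(args)}
    updated["mcpServers"] = servers
    return updated


def register(path: Path = CONFIG_PATH) -> None:
    """Add a hypothetical "glyphs-info" entry that runs `uvx glyphs-info-mcp`."""
    config = json.loads(path.read_text(encoding="utf-8")) if path.exists() else {}
    config = add_mcp_server(config, "glyphs-info", "uvx", ["glyphs-info-mcp"])
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_text(json.dumps(config, indent=2), encoding="utf-8")
```

Call `register()` once, then restart Claude Desktop so it picks up the change. If the app reports that it cannot find `uvx`, putting the absolute path printed by `which uvx` into the `command` field is a common fix.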

The source code is on my GitHub:

It’s open-source (v1.0.0), so feel free to poke around or let me know if you find any bugs.